The self-trivialisation of software development

Software developers have a weird professional instinct: we try to make our own work unnecessary.

Not in some noble, self-sacrificing way. It’s more reflexive than that. You hit a problem, you solve it, and then you package that solution so nobody (including you) ever has to think about it again. Import, call, move on. The history of software is basically a history of people making hard things disappear behind one-line function calls.

This has worked spectacularly well for sixty years. But now we’re doing it with AI, and the thing we’re trivialising isn’t a specific technical problem anymore. It’s the act of writing code itself. That changes the equation.

Solving things once, forever

The pattern started early. Programmers moved from machine code to assembly to COBOL, each step hiding the layer below. By the 1970s, subroutine libraries let you use someone else’s solution for common operations instead of writing your own. This was mostly vendor-driven and closed off, but the idea was already there: solve it once, reuse it everywhere.

The real shift came with free software and open source in the 80s and 90s. Richard Stallman wrote in the GNU Manifesto that “the fundamental act of friendship among programmers is the sharing of programs.” Once the internet made that sharing frictionless, collaboration exploded. If someone found a bug in a shared module, the fix reached everyone who used it. Whole problem domains started getting solved once and abstracted away permanently.

C.A.R. HoareThere are two ways of constructing a software design: One way is to make it so simple that there are obviously no deficiencies, and the other way is to make it so complicated that there are no obvious deficiencies.

Each generation of tooling pushed the boundary of what counts as “easy.” Operating systems made CPU scheduling someone else’s problem. Garbage collectors eliminated entire categories of memory bugs. Web frameworks turned complex HTTP request handling into convention-driven scaffolding. What used to take a team weeks to build, you can now get running in minutes with NextJS, Laravel, or .NET.

By the 2010s, package managers like npm and Maven had grown so large that the default approach to any common problem was to search for a library first and write code second. Developers became integrators and configurators more than inventors.

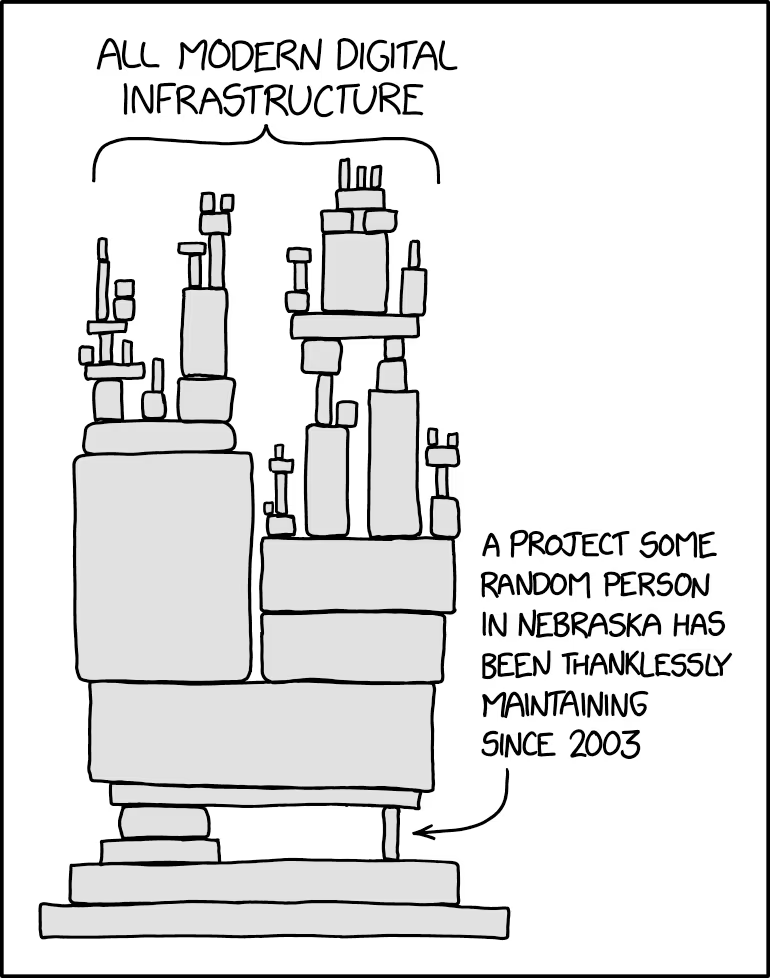

The absurd endpoint of this was the left-pad incident in 2016. An 11-line JavaScript function for padding strings was published as an npm package. When the author removed it from the registry, thousands of projects broke. Even string padding had been trivialised into a dependency. Nobody bothered solving it themselves.

That incident, although serious, was kind of funny, because it revealed something real: modern software is layers upon layers of other people’s solved problems. Pull out one tiny layer and the whole stack can buckle.

Why we do this to ourselves

Larry Wall, the creator of Perl, named three virtues of programmers: laziness, impatience, and hubris. All three drive self-trivialisation.

Laziness (the productive kind) means spending effort now to avoid effort later. If a task is repetitive, you script it. If you’ve solved a problem, you package it so you never face it again. Impatience means you can’t stand slow processes, so you automate deploys, set up hot reloading, build CI pipelines. Hubris means you want to build something so clean and general that nobody can criticize it, and ideally, something other people adopt.

There’s a business case too. If a company can use an off-the-shelf solution instead of funding a six-month build, they will. The entire SaaS industry is built on selling trivialised complexity. Stripe trivialises payments. Auth0 trivialises authentication. Vercel trivialises deployment. Startups today can launch sophisticated products by composing these services and focusing only on their actual idea.

Jeff Atwood put it bluntly on Coding Horror:

The best code is no code at all. Every new line of code you willingly bring into the world is code that has to be debugged, code that has to be read and understood, code that has to be supported.

There’s also the reliability argument. A widely-used, community-maintained library is almost certainly more reliable than your hand-rolled version. Every new line of code is a liability. Using a proven solution means fewer of your own bugs, fewer of your own edge cases, fewer of your own late nights.

So the incentives all point the same direction: trivialise, share, reuse.

But AI changes what’s being trivialised

Here’s where the story takes a turn.

Everything I’ve described so far, libraries, frameworks, package managers, cloud services, those all trivialise specific technical problems. Somebody solved TCP/IP so you don’t have to. Somebody solved user authentication so you don’t have to. The developer’s job shifted from solving those problems to composing the solutions. But the job of actually writing the composition, the glue code, the business logic, the wiring, that remained firmly human work.

AI coding assistants like GitHub Copilot, Cursor, and Claude Code are different. They don’t trivialise a specific problem domain. They trivialise the act of writing code across all domains. When you describe what you want in natural language and an LLM produces working code, the abstraction layer isn’t between you and memory management or between you and HTTP parsing. It’s between you and programming itself.

This is a qualitatively different kind of trivialisation. Previous rounds moved developers up the stack. From machine code to algorithms, from algorithms to application logic, from application logic to system composition. Each time, you still needed to write code at the new level. AI threatens to make the writing part optional at every level simultaneously.

That doesn’t mean developers disappear. But it does mean the value of “I can write code” drops, while the value of “I know what code should be written” rises. The scarce skill shifts from implementation to judgment.

What stays non-trivial

If AI can generate working code from descriptions, what’s left?

A few things, and they’re worth thinking about carefully.

Knowing what to build. The hardest part of most software projects has never been the typing. It’s figuring out what the system should actually do, which tradeoffs to make, and how to decompose a vague business need into something specific enough to implement. AI doesn’t help much here because the problem isn’t technical. It’s about understanding people, organizations, and constraints.

Recognizing when the abstraction breaks. This is the one that worries me most. Every abstraction leaks eventually. When your ORM generates a terrible query plan, you need to understand SQL. When your Kubernetes pod keeps crashing, you need to understand containers, networking, maybe even Linux internals. If developers learn to build on top of AI-generated code without understanding what it’s doing, the failures will be harder to diagnose, not easier. The left-pad incident broke builds. Misunderstood AI-generated code can break production systems in ways nobody on the team can explain.

Maintaining what already exists. Nothing stays trivial on its own. Libraries need maintainers. Abstractions need updating when underlying platforms change. There are engineers whose full-time job is improving the Linux kernel, or the Python runtime, or Postgres internals. These people manage complexity at the core so everyone else can pretend it’s simple. That work doesn’t go away. If anything, it gets more important as the layers multiply.

Deciding what not to build. With AI making implementation cheaper, the temptation to build more will increase. But the maintenance cost of software doesn’t drop just because the writing cost did. Every system you create is a system you have to keep running. The developers who’ll be most valuable are the ones who can say “we don’t need this” with confidence, because they understand the problem well enough to know a simpler solution exists.

The real risk isn’t obsolescence

In my time in software, I’ve already watched a couple rounds of “this will replace developers” come and go. WordPress was going to do it. No-code tools like Bubble and Webflow were going to do it. None of them did, because new layers of complexity always emerged.

AI might be different in degree, but I don’t think it’s different in kind. The frontier of what counts as “easy” will move again, and developers will move with it. The job in five years probably looks quite different from today, the same way today’s job looks different from writing CGI scripts in Perl.

The risk I’m more concerned about is a loss of depth. If a generation of developers learns to build software primarily by prompting AI and composing API calls, without ever understanding how the underlying systems work, the profession hollows out. You end up with a lot of people who can build things when everything works, and very few who can fix things when something breaks in an unfamiliar way.

Edsger Dijkstra said “simplicity is prerequisite for reliability.” That’s true. But it only holds if someone understands the complexity being hidden. If nobody does, you don’t have simplicity. You have fragility with a nice interface.

Where this leaves us

The self-trivialisation of software development isn’t new. It’s been the defining pattern of the field since its beginning. Each generation solved hard problems, packaged those solutions, and moved on to harder ones. That’s genuinely how progress works.

What’s new is that AI is trivialising the packaging step itself. The tool we use to solve and share problems (writing code) is becoming the thing being automated. That’s a loop closing in on itself.

My honest take: the developers who’ll thrive are the ones who treat AI as a power tool, not a replacement for understanding. Use it to move faster, absolutely. Let it handle the boilerplate. But stay curious about what’s happening underneath. Know why your system works, not just that it works.

The history of this field suggests that making hard things easy always creates new hard things. That hasn’t changed. The question is whether we’ll be ready for the new hard things when they arrive, or whether we’ll have trivialised away the skills we need to face them.

Today’s challenges, once solved, will be tomorrow’s one-line imports. That’s the deal. It’s always been the deal. The only thing that changes is what counts as the challenge.